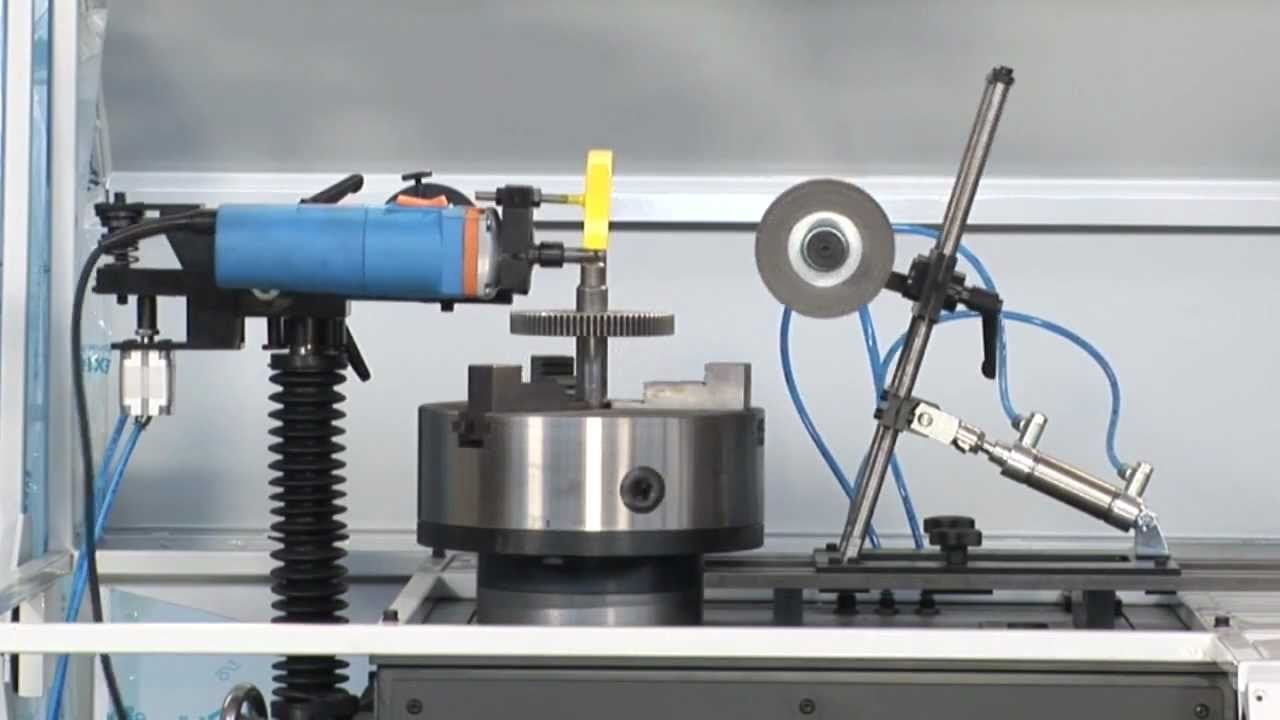

GEAR DEBURRING MACHINE

Gear deburring is a process that has changed substantially over the past 10 years. Advances include new types of deburring tools, “wet” machines, automatic loading and unloading, automatic part transfer and turnover, and vision systems for part identification.

Three types of tools are used in gear deburring: grinding wheels, brushes, and carbide tools. Each is discussed below.

Grinding Wheels

Many wheel grits are available, from 320 grit for small burrs and light chamfers to 57 grit for large burrs and heavy chamfers, with numerous sizes in between. Grinding wheels usually provide the required cosmetic appearance for a deburred gear. Correct setup of the grinding wheel is critical for good wheel life and consistent chamfers: the point of contact on the grinding wheel should correspond to the approach angle of the grinding head. For example, use a protractor to set a 45° approach angle for the grinding head. Next, draw a line through the center of the grinding wheel, then draw a second line at 45° to the first. The contact point between the gear and the grinding wheel should lie on the 45° line.
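To make the setup rule concrete, the minimal sketch below computes where the 45° line meets the wheel rim. It assumes the second line is also drawn through the wheel center, takes the first line as the x-axis, and uses a hypothetical 50 mm wheel diameter; `contact_point` is an illustrative helper, not part of any machine's software.

```python
import math


def contact_point(wheel_diameter_mm: float, approach_angle_deg: float = 45.0) -> tuple[float, float]:
    """Return (x, y) of the contact point on the wheel rim, relative to the wheel center.

    Assumption: the reference line through the wheel center is the x-axis, and the
    angle line is drawn through the center at approach_angle_deg to that reference.
    """
    radius = wheel_diameter_mm / 2.0
    theta = math.radians(approach_angle_deg)
    return (radius * math.cos(theta), radius * math.sin(theta))


# Hypothetical 50 mm wheel with the 45° approach angle from the example above.
x, y = contact_point(50.0)
print(f"contact point: x = {x:.2f} mm, y = {y:.2f} mm")  # contact point: x = 17.68 mm, y = 17.68 mm
```

At 45° the contact point sits equally far along both axes, which is why marking the 45° line on the wheel is enough to locate it by eye.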

The attainable chamfer size is determined by the size of the burr to be removed from the part. Three additional factors also affect chamfer size: wheel grit size, work spindle speed, and the pressure the grinding wheel applies to the part. The grinding wheel speed is marked on the wheel and is usually 15,000 to 18,000 RPM. Aluminum oxide wheels are the most commonly used.
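Because the wheel speed is marked on the wheel, one simple pre-run check is to compare the operating speed against that marking. The minimal sketch below treats the marked speed as a maximum and also converts RPM to peripheral (surface) speed with v = πDn/60. The wheel diameter and the helper names `surface_speed_m_per_s` and `check_wheel_speed` are hypothetical.

```python
import math


def surface_speed_m_per_s(wheel_diameter_mm: float, rpm: float) -> float:
    """Peripheral speed v = pi * D * n / 60, with D converted from mm to m."""
    return math.pi * (wheel_diameter_mm / 1000.0) * rpm / 60.0


def check_wheel_speed(operating_rpm: float, marked_max_rpm: float) -> None:
    """Refuse to run a wheel faster than the speed marked on it."""
    if operating_rpm > marked_max_rpm:
        raise ValueError(
            f"{operating_rpm:,.0f} RPM exceeds the marked {marked_max_rpm:,.0f} RPM"
        )


# Hypothetical 50 mm wheel marked for 18,000 RPM, run at 15,000 RPM.
check_wheel_speed(operating_rpm=15_000, marked_max_rpm=18_000)
print(f"{surface_speed_m_per_s(50.0, 15_000):.1f} m/s")  # 39.3 m/s
```

The surface speed scales with wheel diameter, so two wheels at the same RPM do not cut at the same rate if their diameters differ.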

Brushes

Parts with small burrs can be deburred effectively with a brush. Two types of brushes are used for deburring: wire and nylon. Wire brushes are made with straight, crimped, or knotted bristles, and the wire diameter and length determine how aggressively the brush deburrs. Nylon brushes can be impregnated with either aluminum oxide or silicon carbide, in grit sizes from 80 to 400. The specific application determines which type of brush is required. Where a heavy burr is removed with a grinding wheel or carbide tool, a brush is often used as a secondary process to remove the small burrs created by the first operation.
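The tool selection described in this paragraph can be summarized as a small process plan. The minimal sketch assumes a simple two-level burr classification (`BurrSize`), since the text gives no numeric threshold between small and heavy burrs; `NylonBrush` and `plan_operations` are hypothetical names, and the only limits enforced are the two abrasives and the 80 to 400 grit range stated above.

```python
from dataclasses import dataclass
from enum import Enum


class BurrSize(Enum):
    SMALL = "small"
    HEAVY = "heavy"


@dataclass(frozen=True)
class NylonBrush:
    """Abrasive-impregnated nylon brush, limited to the options named in the text."""
    abrasive: str  # "aluminum oxide" or "silicon carbide"
    grit: int      # 80 to 400

    def __post_init__(self) -> None:
        if self.abrasive not in ("aluminum oxide", "silicon carbide"):
            raise ValueError(f"unsupported abrasive: {self.abrasive!r}")
        if not 80 <= self.grit <= 400:
            raise ValueError(f"grit {self.grit} is outside the 80 to 400 range")


def plan_operations(burr: BurrSize, primary_tool: str = "grinding wheel") -> list[str]:
    """Return the deburring passes in order.

    Small burrs get a single brush pass. Heavy burrs get a grinding wheel or
    carbide tool first, then a brush pass for the small burrs the first pass
    leaves behind (assumed here to be always included, although the text
    says only that it is "often" used).
    """
    if burr is BurrSize.SMALL:
        return ["brush"]
    if primary_tool not in ("grinding wheel", "carbide tool"):
        raise ValueError(f"unsupported primary tool: {primary_tool!r}")
    return [primary_tool, "brush"]


brush = NylonBrush(abrasive="silicon carbide", grit=120)
print(plan_operations(BurrSize.HEAVY, primary_tool="carbide tool"))
# ['carbide tool', 'brush']
```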

Carbide Tools

The use of carbide deburring tools is a relatively new development. There are three advantages to using carbide tools:

• Reduced deburring time. Carbide tools can run at 40,000 RPM, versus 15,000 to 18,000 RPM for grinding wheels.

• Reduced setup time, because there is no need to establish an approach angle as with a grinding wheel.

• Ability to deburr cluster gears, or gears having the root of the tooth close to the gear shaft or hub.
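The speed advantage in the first point can be put into numbers with simple arithmetic. This sketch compares spindle speeds only; actual deburring time also depends on burr size, applied pressure, and feed, so the ratio should not be read as a predicted cycle-time reduction. The constant and function names are illustrative.

```python
CARBIDE_RPM = 40_000
GRINDING_WHEEL_RPM = (15_000, 18_000)  # typical range from the text


def speed_ratio(tool_rpm: float, reference_rpm: float) -> float:
    """How many times faster one spindle turns than another."""
    return tool_rpm / reference_rpm


for wheel_rpm in GRINDING_WHEEL_RPM:
    print(f"carbide vs. {wheel_rpm:,} RPM wheel: {speed_ratio(CARBIDE_RPM, wheel_rpm):.2f}x")
# carbide vs. 15,000 RPM wheel: 2.67x
# carbide vs. 18,000 RPM wheel: 2.22x
```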

Deburring Machine Features

Deburring is performed by floating-style deburring heads driven by variable-speed air motors or turbines. The floating heads have air-operated, adjustable counterweights that set the pressure applied to the part being deburred.

The floating heads can use grinding wheels, brushes, or carbide tools, and changing over from one to another takes only a matter of minutes, so a single machine can handle a number of different parts.

ADVANTAGES:

1. Quick-action clamping.

2. Precise indexing.

3. The multi-module indexer makes it possible to deburr a wide range of spur gears.

4. Fast deburring, due to the sequential operation of the grinding head and the indexer mechanism.

5. Low-cost automation.

6. Flexible circuit design: the machine can be converted to fully automatic operation with minimal additional circuit components.

7. Saves labor cost and reduces the monotony of the operation.
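The precise indexing and the sequential operation of the grinding head and indexer listed above can be sketched as a per-tooth cycle in which the two never move at the same time. The only hard geometric fact used is that one tooth pitch is 360° divided by the number of teeth; the step names, their order, and `deburr_cycle` itself are hypothetical and are not taken from any particular machine's control logic.

```python
def deburr_cycle(tooth_count: int) -> list[tuple[str, float]]:
    """Hypothetical sequential cycle for one spur gear.

    The gear is clamped once; then, for every tooth, the grinding head
    deburrs, retracts, and only then does the indexer advance the gear by
    one tooth pitch (360 degrees / tooth_count). Each step is returned as
    (action, indexer angle in degrees).
    """
    if tooth_count < 1:
        raise ValueError("tooth_count must be at least 1")
    pitch_deg = 360.0 / tooth_count
    steps = [("clamp", 0.0)]
    for tooth in range(tooth_count):
        angle = tooth * pitch_deg
        steps.append(("deburr", angle))        # head works on the current tooth
        steps.append(("retract head", angle))  # head clears before indexing
        steps.append(("index", (tooth + 1) * pitch_deg))
    steps.append(("unclamp", 360.0))
    return steps


# A 4-tooth example keeps the output short: the index pitch is 90 degrees.
for action, angle in deburr_cycle(4)[:5]:
    print(f"{action:<13} {angle:6.1f} deg")
```

Keeping the head and indexer strictly alternating is what lets the cycle run fast without the head ever touching a moving gear.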

APPLICATIONS:

1. Machine tool manufacturing industry.

2. Agriculture machinery manufacturing.

3. Molded gear industry.

4. Timing pulley manufacturing.

5. Sprocket and chain wheel manufacturing.